Scientific fact-checking is essential for vetting claims in specialized domains such as biomedicine and materials science, yet existing systems often hallucinate or reason inconsistently when verifying a technical claim against a provided evidence snippet. We present a modular pipeline centered on atomic predicate-argument decomposition and calibrated, uncertainty-gated corroboration.

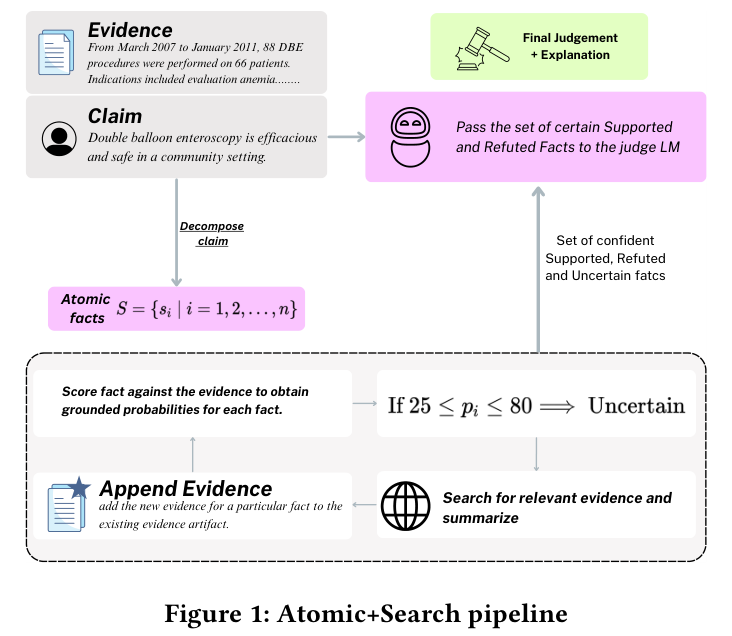

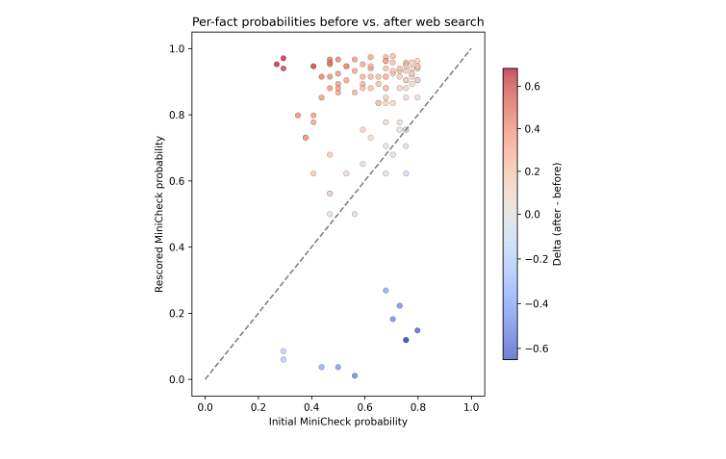

The pipeline decomposes each claim into atomic facts, aligns each fact to local snippets of the provided evidence, and verifies it with a compact evidence-grounded checker. Only facts the checker is uncertain about trigger a domain-restricted web search over authoritative sources. The system supports both binary and tri-valued classification, and it abstains with a Not Enough Information (NEI) label when retrieved evidence conflicts with the provided context.
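The uncertainty gating described above can be illustrated with a minimal Python sketch. It is not the system's implementation: the names `FactVerdict`, `verify_claim`, and `aggregate`, the 0.8 confidence threshold, the handling of an empty search result, and the aggregation rule (any refuted fact refutes the claim) are all assumptions made for illustration. The two checker callables stand in for the local evidence-grounded checker and the domain-restricted web search, and binary classification is omitted.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

SUPPORTED, REFUTED, NEI = "SUPPORTED", "REFUTED", "NEI"


@dataclass
class FactVerdict:
    fact: str
    label: str         # one of SUPPORTED, REFUTED, NEI
    confidence: float  # assumed to be a calibrated probability for `label`


def verify_claim(
    facts: List[str],
    local_check: Callable[[str], FactVerdict],
    web_check: Callable[[str], Optional[FactVerdict]],
    threshold: float = 0.8,  # hypothetical gate value, not taken from the paper
) -> List[FactVerdict]:
    """Verify atomic facts locally; escalate only the uncertain ones."""
    verdicts = []
    for fact in facts:
        local = local_check(fact)
        if local.confidence >= threshold:
            # Confident local verdict: no web search is triggered.
            verdicts.append(local)
            continue
        web = web_check(fact)
        if web is None:
            # Assumption: nothing retrieved means we abstain on this fact.
            verdicts.append(FactVerdict(fact, NEI, local.confidence))
        elif NEI not in (local.label, web.label) and web.label != local.label:
            # Retrieved evidence conflicts with the provided context: abstain.
            verdicts.append(FactVerdict(fact, NEI, min(local.confidence, web.confidence)))
        else:
            verdicts.append(web)
    return verdicts


def aggregate(verdicts: List[FactVerdict]) -> str:
    """Tri-valued claim label (assumed rule: REFUTED > NEI > SUPPORTED)."""
    if not verdicts:
        return NEI
    labels = {v.label for v in verdicts}
    if REFUTED in labels:
        return REFUTED
    if NEI in labels:
        return NEI
    return SUPPORTED


if __name__ == "__main__":
    # Stub checkers with made-up confidences, for demonstration only.
    def local_stub(fact: str) -> FactVerdict:
        return FactVerdict(fact, SUPPORTED, 0.95 if "confident" in fact else 0.55)

    def web_stub(fact: str) -> Optional[FactVerdict]:
        return FactVerdict(fact, REFUTED, 0.9)

    results = verify_claim(["confident fact", "uncertain fact"], local_stub, web_stub)
    print([v.label for v in results], aggregate(results))
```

In this sketch the web checker is called only for the second fact, and because its verdict contradicts the local one, that fact is marked NEI and not overridden. A real system would need a calibration step before the threshold comparison is meaningful.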